Games are as old as human society, as the image below illustrates. But as with all other parts of society, games and gaming are being profoundly changed by the computing and communication revolution.

Some of the changes are obvious, some are less so.

Computer games humans play

It is both useful and sobering to consider the enormous progress that has been made in computer technology over the past 50 years. Back in 1965 Intel co-founder Gordon Moore observed in a little-noticed article that the complexity of integrated circuits had increased at a rate of roughly a factor of two per year for several years, and “there is no reason to believe it will not remain nearly constant for at least 10 years.”

That was arguably the world’s greatest understatement: the trend of computer devices roughly doubling in complexity every 18 months or so (not quite every 12 months as originally predicted) has continued unabated for nearly 50 years, resulting in devices, such as the latest microprocessor and memory chips, that incorporate billions of components and are billions of times more capable than the primitive items that once were considered the pinnacle of modern technology. This doubling is now called Moore’s law, and when it comes to games, “the Moore the merrier”.
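As a rough sanity check on the "billions of times" figure, the arithmetic behind a steady doubling can be sketched in a few lines of Python. The function name `growth_factor` and the idealized assumption of a perfectly constant doubling period are ours, for illustration only; real chip generations do not follow so tidy a curve.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Growth multiple after `years` if complexity doubles every `doubling_months` months.

    Idealized model: assumes the doubling period never changes.
    """
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


if __name__ == "__main__":
    # Compare the original 12-month estimate with slower doubling periods.
    for months in (12, 18, 24):
        factor = growth_factor(50, months)
        print(f"Doubling every {months} months for 50 years: about {factor:.2e}x")
```

Under this model, 50 years of doubling every 18 months is about 33 doublings, or a factor of roughly ten billion, which is consistent with the claim above. The result is very sensitive to the doubling period: at 24 months the same 50 years yields only tens of millions.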

What are we doing with these devices? Among other things, many millions of persons worldwide hold in their hands a smartphone with features, such as voice recognition and precise GPS positioning, not available on any device at any price just a few years ago.

And, as anyone with a ‘teenager’ in the house will attest, computers are indeed playing games with us. The latest systems from Sony, Nintendo and others have more computational horsepower (over one trillion arithmetic operations per second) than the world’s fastest supercomputers just 15 years or so ago, and the real-time graphics they generate are truly remarkable. One consequence is the emergence of multi-player real-time immersive games.

Human games computers play

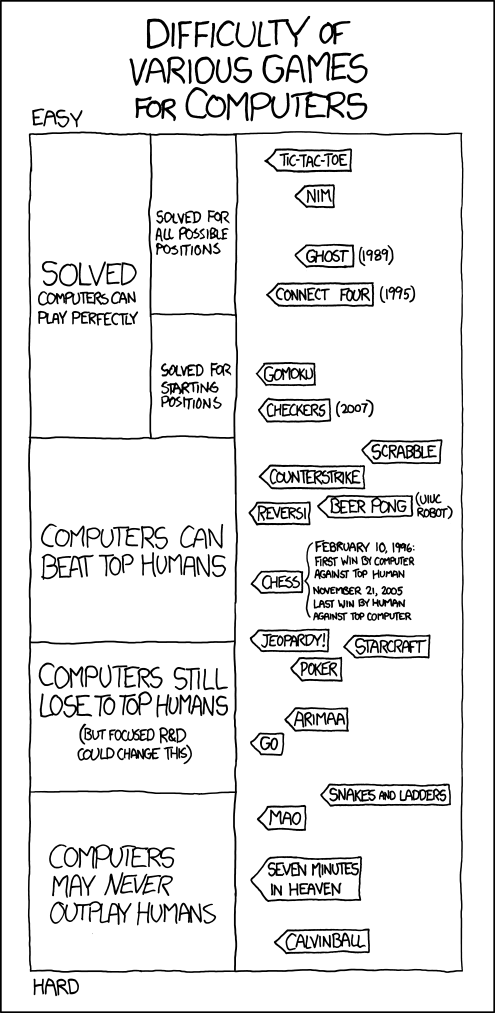

But computers are also playing games that require something else: significant intelligence, at least of the artificial variety. Let us look at various famous human games. State-of-the-art computer programs never lose when playing checkers and backgammon. They can play a very good hand of bridge; indeed, human players often practice against bridge-playing programs, as they do with chess. Likewise, brute-force Scrabble programs will impassively demolish most human players.

Computer programs for poker have significantly improved in the past few years (bluffing is less of a big deal than it might appear), and one program recently defeated some professional players in a tournament. Computer programs for Go have also become much stronger in recent years, although there is still a significant gap between the best computer programs and strong human players. (Go has many, many more positions than chess, and so raw power is less effective.)
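To get a feel for why raw power helps less in Go, one can compare very rough game-tree sizes. The figures used below (about 35 legal moves per turn over about 80 turns for chess, and about 250 moves per turn over about 150 turns for Go) are commonly quoted ballpark estimates that we are assuming for illustration; they are not exact counts, and the helper name `log10_tree_size` is hypothetical.

```python
import math


def log10_tree_size(branching: float, plies: int) -> float:
    """Base-10 logarithm of branching**plies, a crude estimate of game-tree size."""
    return plies * math.log10(branching)


if __name__ == "__main__":
    # Assumed ballpark figures, not exact counts.
    games = {"chess": (35, 80), "Go": (250, 150)}
    for name, (branching, plies) in games.items():
        exponent = log10_tree_size(branching, plies)
        print(f"{name}: roughly 10^{exponent:.0f} possible lines of play")
```

Working with logarithms avoids handling astronomically large integers directly. Under these assumptions the Go tree is larger than the chess tree by more than 200 orders of magnitude, a gap that no plausible amount of hardware doubling could close by exhaustive search alone.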

Many are familiar with the 1997 defeat of Garry Kasparov, the world’s reigning chess champion, by IBM’s “Deep Blue” computer. Commenting on his experience, Kasparov later reflected, “It was my luck (perhaps my bad luck) to be the world chess champion during the critical years in which computers challenged, then surpassed, human chess players.”

Deep Blue employed some new techniques, but for the most part it simply applied enormous computing power, so that it could store many openings, look ahead many moves, and (except when the programmers erred) never make mistakes. Cheap laptop programs now beat all human comers. So we have ‘solved chess’. But as John McCarthy wrote,

In 1965 the Russian mathematician Alexander Kronrod said “Chess is the Drosophila of artificial intelligence.” However, computer chess has developed as genetics might have if the geneticists had concentrated their efforts starting in 1910 on breeding racing Drosophila. We would have some science, but mainly we would have very fast fruit flies.

Deep Fritz, running on a humble workstation, beat world champion Vladimir Kramnik in 2006. Monty Newborn, one of the match organizers, said, “If you are interested in programming computers so that they compete in games, the two interesting ones are poker and go. That is where the action is.”

In a fundamental way, human games that computers can always win at are no longer human games. It is hard to see checkers, bridge or chess making a serious resurgence in this century.
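The idea of winning by looking ahead through every possible continuation can be illustrated on a game small enough to search completely. The sketch below is not Deep Blue's method (chess engines must cut the search off and estimate positions); it is a toy exhaustive search for a simple stone-taking game that we have chosen for illustration: players alternately remove one, two or three stones from a pile, and whoever takes the last stone wins. The names `can_win` and `best_move` are ours.

```python
from functools import lru_cache
from typing import Optional

MOVES = (1, 2, 3)  # a player may take one, two or three stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player about to move can force a win with `stones` left.

    With zero stones the player to move has already lost, because the
    opponent took the last stone; `any` over no moves returns False.
    """
    return any(not can_win(stones - take) for take in MOVES if take <= stones)


def best_move(stones: int) -> Optional[int]:
    """Return a move that leaves the opponent in a lost position, if one exists."""
    for take in MOVES:
        if take <= stones and not can_win(stones - take):
            return take
    return None  # every move loses against perfect play


if __name__ == "__main__":
    for pile in (4, 5, 10, 21):
        print(pile, can_win(pile), best_move(pile))
```

A program like this never errs in its tiny game, for the same reason given above: it has examined every line of play. The caching decorator keeps the search fast by remembering positions it has already evaluated.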


When natural language is involved

But, to our mind, even this feat was overshadowed by the 2011 defeat of two champion contestants on the American quiz show Jeopardy!, by a new IBM-developed computer system named “Watson.” The Watson achievement was significantly more impressive than Deep Blue or other board-game-playing programs because it involved natural language understanding, namely the intelligent “understanding,” in some sense, of ordinary (often tricky) English text.

For example, in “Final Jeopardy” culminating the Jeopardy! match, in the category “19th century novelists,” the clue was “William Wilkinson’s ‘An Account of the Principalities of Wallachia and Moldavia’ inspired this author’s most famous novel.” Watson correctly responded “Who is Bram Stoker?” [Stoker is the author of Dracula], thus sealing the victory. Legendary Jeopardy! champ Ken Jennings conceded by writing on his tablet, “I for one welcome our new computer overlords.”

Recently computer scientist Matthew Ginsberg eyed a similarly challenging problem: Defeat the world’s best human crossword puzzle solvers.

Ginsberg, who holds a Ph.D. from Oxford and has written a book on artificial intelligence, has already tested his computer program, known as Dr. Fill, in a series of crossword puzzle tournaments, finishing on top in three of 15 contests.

Typical full-size newspaper crossword puzzles have roughly 140 words, and, as in Jeopardy!, the clues are often notoriously subtle. As an example, in a 2010 New York Times crossword puzzle with the theme “rabbits,” the correct answer to the clue “Famous bank robbers” was “BUNNYANDCLYDE.” Obviously such machinations require some degree of imagination and creativity. In the latest American Crossword Puzzle Tournament, held in Brooklyn, New York (March 16-18, 2012), Dr. Fill did well on some easier puzzles, but not so well on two rather difficult puzzles. Still, it finished in a respectable 141st place, not bad for an effort of much smaller scale than IBM’s Watson project.

David Ferrucci, leader of IBM’s Watson project, agrees that “Games are a great motivator for artificial intelligence — they push things forward.” But he emphasizes that “what really matters is where it is taking us.” He is now involved with an effort to commercialize Watson’s technology in the health care field. Perhaps similar applications will be found for Dr. Fill.

Computers have not yet passed the “Turing test,” a test proposed by mathematician Alan Turing back in the 1950s, wherein a human exchanging messages with an unseen partner cannot distinguish between the computer and a human. But they are getting close. As observer Robert Epstein notes, “One thing is certain: whereas the [humans] in the competition will never get any smarter, the computers will.”

The future will be different

So where is all this heading? A recent Time article features an interview with futurist Ray Kurzweil, who predicts an era, roughly in 2045, when machine intelligence will meet, then transcend human intelligence. Such future intelligent systems will then design even more powerful technology, resulting in a dizzying advance that we can only dimly foresee at the present time. Kurzweil outlines this vision in his recent book The Singularity Is Near.

Futurists such as Kurzweil certainly have their skeptics and detractors. Sun Microsystems co-founder Bill Joy is concerned that humans could be relegated to minor players in the future, and that out-of-control, nanotech-produced “grey goo” could destroy life on our fragile planet. Others (including the present authors) believe that these writers are soft-pedaling enormous societal, legal, financial and ethical challenges, as exhibited by the increasingly strident social backlash against technology and science that we see today.

Nonetheless, we agree that Moore’s Law is here to stay, at least for another 20 years or so. Progress in a wide range of other technologies is here to stay. Scientific progress is here to stay. Certainly gaming will never be the same.

And all this is leading, we believe, to real-world artificial intelligence within a few decades. Others, such as roboticist Rodney Brooks, see a longer time horizon:

I don’t think we need to worry anytime soon about the machines taking over. I work in robotics, and the robots we build haven’t gotten rid of people. They just make them more productive. We can relax for a few hundred years, is my guess.

But one way or the other, intelligent computers are coming. Get ready for them.

For additional details, see blog #1, blog #2 and blog #3.

[A version of this article appeared in the Huffington Post.]